OVERVIEW

Constructing sensor models that are highly consistent with real phenomena

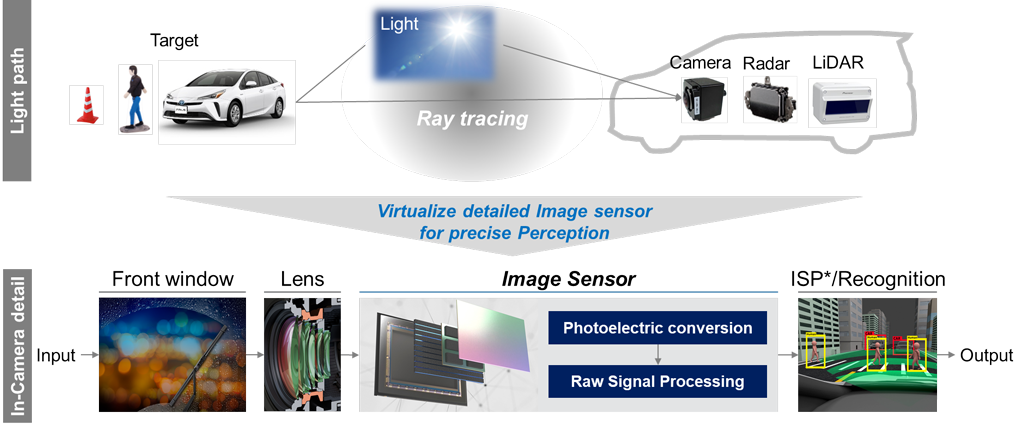

Unlike ordinary vehicle component models, sensors that perceive environmental conditions play a functional role in connecting driving environment models to automated driving control. General-purpose simulators focus mainly on evaluating whether the system control works correctly, so many of their sensing models are so-called ground truth models, that is, functional models. As mentioned earlier, guaranteeing the safety of automated driving vehicles requires understanding the strengths and weaknesses (limitations) of each surrounding-monitoring sensor and using that knowledge to improve the system design, the sensors, and the perception algorithms. However, a functional sensor model does not reflect how electromagnetic waves propagate through space, so the weaknesses of real sensors are difficult to reproduce in simulation. In this paper, we present a physics-based spatial propagation model built on ray tracing.

We have developed a ray-tracing spatial propagation model based on the reflection characteristics (retroreflection, diffuse reflection, specular reflection, etc.) and transmission characteristics of electromagnetic waves: visible light for cameras, millimeter waves for Radar, and near-infrared light for Lidar. The model also captures physical phenomena that change under the influence of the surrounding environment, such as rain, fog, and ambient illumination. Its distinguishing feature is that it represents the spatial propagation characteristics as seen from each sensor through a chain of models that follows the electromagnetic wave principle "driving environment objects – electromagnetic wave propagation – sensors", and uses this chain as the perception model (Fig. 3). Specific examples are shown below.
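The "objects – propagation – sensor" chain can be illustrated with a minimal sketch for an active sensor such as Lidar, where emitter and receiver are co-located. It computes how much power a surface returns toward the sensor from its diffuse, specular and retroreflective components, then applies geometric spreading and two-way attenuation in fog. The lobe shapes, the retroreflector acceptance angle, the 1/R² extended-target falloff, the extinction coefficient and the function names are all illustrative assumptions, not the authors' model.

```python
import math


def monostatic_reflectance(incidence_deg: float, diffuse: float,
                           specular: float, retro: float,
                           spec_exponent: float = 50.0,
                           retro_acceptance_deg: float = 45.0) -> float:
    """Relative power returned toward a co-located emitter/receiver.

    Illustrative lobe shapes (assumptions, not measured data):
      diffuse  -> Lambertian surface, return proportional to cos(theta)
      specular -> Phong-like lobe around the mirror direction, which lies
                  2*theta away from the sensor, so it only returns energy
                  near normal incidence
      retro    -> sent straight back to the source, independent of angle,
                  up to the retroreflector's acceptance angle
    """
    theta = math.radians(incidence_deg)
    cos_t = max(math.cos(theta), 0.0)
    cos_mirror = max(math.cos(2.0 * theta), 0.0)
    retro_term = retro if incidence_deg <= retro_acceptance_deg else 0.0
    return diffuse * cos_t + specular * cos_mirror ** spec_exponent + retro_term


def received_power(p_emit: float, reflectance: float, range_m: float,
                   extinction_per_m: float) -> float:
    """Relative received power from an extended (beam-filling) target.

    1/R^2 geometric falloff times two-way Beer-Lambert attenuation
    exp(-2 * alpha * R), where alpha models fog or rain.
    """
    two_way_transmission = math.exp(-2.0 * extinction_per_m * range_m)
    return p_emit * reflectance * two_way_transmission / range_m ** 2


# Usage: a retroreflective sign at 50 m, seen at 30 degrees incidence.
r = monostatic_reflectance(30.0, diffuse=0.2, specular=0.3, retro=2.0)
clear = received_power(1.0, r, 50.0, extinction_per_m=0.0)
foggy = received_power(1.0, r, 50.0, extinction_per_m=0.01)  # hypothetical fog
print(f"fog keeps {foggy / clear:.1%} of the clear-weather return")
```

At oblique incidence the specular term vanishes for a monostatic sensor while the retroreflective term persists, which is why the reflection type matters as much as the overall reflectivity.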

(1) Camera

The camera model simulates the spectral characteristics of the light that reaches the semiconductor image sensor (e.g., CMOS), rather than RGB values tailored to human vision. Sunlight is formulated as a sky model, which simulates a precise sunlight source from the input time, latitude, and longitude.
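To illustrate what simulating the spectral input to the image sensor means, the sketch below computes a raw pixel signal as the integral of illuminant × surface reflectance × channel sensitivity over wavelength, using the trapezoidal rule. The Gaussian channel sensitivities and the near-infrared leak on the red channel are hypothetical stand-ins for measured sensor and color-filter curves.

```python
import math

# 400-1000 nm in 10 nm steps: silicon sensors respond beyond visible light.
WAVELENGTHS_NM = list(range(400, 1001, 10))


def gaussian(center_nm: float, width_nm: float) -> list[float]:
    return [math.exp(-0.5 * ((w - center_nm) / width_nm) ** 2)
            for w in WAVELENGTHS_NM]


def trapezoid(y: list[float], x: list[float]) -> float:
    return sum(0.5 * (y[i] + y[i + 1]) * (x[i + 1] - x[i])
               for i in range(len(x) - 1))


def channel_signal(illuminant, reflectance, sensitivity) -> float:
    """Raw pixel signal: integral of E(lambda) * rho(lambda) * S(lambda)."""
    integrand = [e * r * s for e, r, s in zip(illuminant, reflectance, sensitivity)]
    return trapezoid(integrand, WAVELENGTHS_NM)


# Hypothetical channel sensitivities; the red filter also leaks some NIR.
GREEN = gaussian(540.0, 40.0)
RED = [v + 0.3 * n for v, n in zip(gaussian(600.0, 40.0), gaussian(850.0, 60.0))]

flat_light = [1.0] * len(WAVELENGTHS_NM)
# A surface that is dark in the visible band but bright in the near infrared.
nir_surface = [1.0 if w >= 750 else 0.0 for w in WAVELENGTHS_NM]

print("green:", channel_signal(flat_light, nir_surface, GREEN))
print("red:  ", channel_signal(flat_light, nir_surface, RED))
```

In this hypothetical setup, a model that carries only visible-band RGB values would treat the surface as black, while the spectral model shows the red pixel still responding through the filter's NIR leak.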

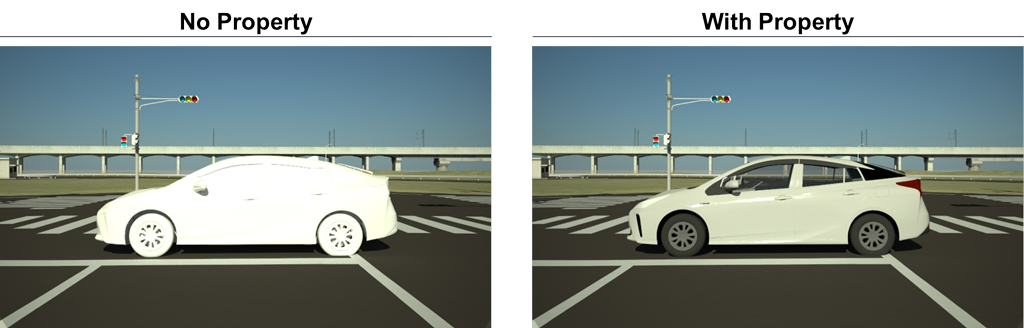

As shown in Fig. 4, reflection characteristics are defined for each object to create a realistic simulated image.

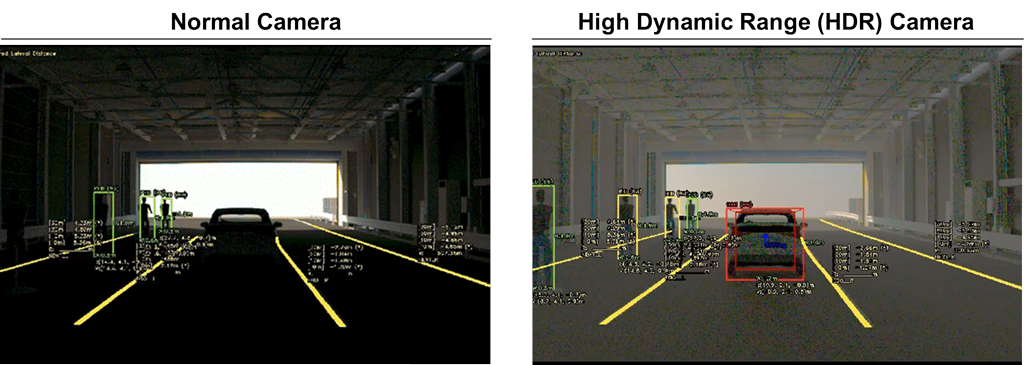

Fig. 5 also shows a simulation confirming that the HDR (High Dynamic Range) camera model provides sufficient visibility for recognition even in a dark tunnel, where weak visible light inside the tunnel is combined with intense backlight when approaching the exit.
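One common way a camera achieves high dynamic range is to merge a long and a short exposure. The source does not state which HDR scheme its camera model uses, so the sketch below assumes a simple two-exposure merge with a hypothetical 12-bit linear sensor. Radiances are in arbitrary units chosen to mimic a dark tunnel interior and a bright exit.

```python
FULL_SCALE = 4095  # hypothetical 12-bit ADC


def expose(radiance: float, exposure: float, full_scale: int = FULL_SCALE) -> int:
    """Linear sensor: digital number = radiance * exposure, clipped and quantized."""
    return min(int(radiance * exposure), full_scale)


def merge_hdr(radiance: float, t_long: float, t_short: float,
              full_scale: int = FULL_SCALE) -> float:
    """Estimate radiance from two exposures of the same scene point.

    Use the long exposure (better for dark regions) unless it saturates;
    then fall back to the short exposure, rescaled to the same units.
    """
    long_dn = expose(radiance, t_long, full_scale)
    if long_dn < full_scale:
        return long_dn / t_long
    return expose(radiance, t_short, full_scale) / t_short


T_LONG, T_SHORT = 64.0, 1.0 / 16.0  # powers of two keep the arithmetic exact
scene = {"tunnel wall": 2.0, "pedestrian": 3.0, "tunnel exit": 20000.0}
for name, radiance in scene.items():
    print(f"{name:12s} long={expose(radiance, T_LONG):5d} "
          f"short={expose(radiance, T_SHORT):5d} "
          f"hdr={merge_hdr(radiance, T_LONG, T_SHORT):8.1f}")
```

With only the short exposure, the wall and the pedestrian both read 0 and become indistinguishable; with only the long exposure, the exit saturates. The merged estimate recovers both ends of the range.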

(2) Millimeter wave radar

Radar is the most difficult sensor to model. Three reflection models are defined and selected according to how radio waves behave at the reflecting target. The Physical Optics (PO) approximation is used as a scatterer model for small objects such as cars and people, while the Geometric Optics (GO) approximation is used as a reflector model for large objects such as buildings and road surfaces. In addition, to shorten the analysis time, a Radar Cross-Section (RCS) model is defined in advance for each object and assigned to the objects when they are combined into a scenario. Figure 6 shows a vehicle passing between two cars as an example of a sensor weakness. The simulation reproduces the weakness that a radar with low azimuth resolution does not output the perceived object at the correct location, and shows that higher azimuth resolution improves this.
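Using a precomputed RCS instead of a full PO/GO field computation reduces each target to a single number σ in the monostatic radar equation, P_r = P_t G² λ² σ / ((4π)³ R⁴). The sketch below pairs that equation with a simple azimuth-separability check in the spirit of the Fig. 6 scenario. The RCS values, transmit power, antenna gain, the 77 GHz carrier and the angular resolutions are illustrative assumptions, and treating two targets as separable only when their angular separation exceeds the azimuth resolution is a rule of thumb, not the authors' criterion.

```python
import math

C_M_PER_S = 299_792_458.0

# Hypothetical per-object-class RCS table (m^2), computed offline once and
# reused whenever the object is placed in a scenario.
RCS_TABLE_M2 = {"car": 10.0, "pedestrian": 0.5}


def radar_received_power_w(p_tx_w: float, gain_lin: float, freq_hz: float,
                           rcs_m2: float, range_m: float) -> float:
    """Monostatic radar equation: Pr = Pt G^2 lambda^2 sigma / ((4 pi)^3 R^4)."""
    wavelength_m = C_M_PER_S / freq_hz
    return (p_tx_w * gain_lin ** 2 * wavelength_m ** 2 * rcs_m2
            / ((4.0 * math.pi) ** 3 * range_m ** 4))


def separable_in_azimuth(lateral_gap_m: float, range_m: float,
                         az_resolution_deg: float) -> bool:
    """Rule of thumb: two targets at the same range are resolved in azimuth
    only if the angle they subtend exceeds the azimuth resolution."""
    angular_sep_rad = 2.0 * math.atan(lateral_gap_m / (2.0 * range_m))
    return angular_sep_rad > math.radians(az_resolution_deg)


# Usage: two parked cars 3 m apart, 50 m ahead, hypothetical 77 GHz radar.
p_car = radar_received_power_w(10e-3, 100.0, 77e9, RCS_TABLE_M2["car"], 50.0)
print(f"car echo at 50 m: {p_car:.3e} W")
print("4 deg resolution separates them:", separable_in_azimuth(3.0, 50.0, 4.0))
print("1 deg resolution separates them:", separable_in_azimuth(3.0, 50.0, 1.0))
```

At 50 m the two cars subtend only about 3.4°, so a 4° azimuth resolution merges them into one detection between the true positions, while a 1° resolution keeps them apart.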

(3) Lidar

Laser light is relatively easy to model because of its high directivity. Figure 7 shows a 360° scanning Lidar model, which can be used to evaluate environmental disturbances such as background light.
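Because each laser pulse travels along an almost straight line, a scanning Lidar can be modeled by casting one ray per beam direction. The sketch below generates the ray directions of a hypothetical 360° scanner (the elevation angles, azimuth step, mounting height and maximum range are assumptions), intersects them with a flat ground plane as the only scene geometry, and converts range to round-trip time of flight, t = 2R/c.

```python
import math

C_M_PER_S = 299_792_458.0


def scan_directions(elevations_deg, azimuth_step_deg):
    """Unit ray directions (x forward, y left, z up) for a 360-degree scan."""
    n_azimuth = int(round(360.0 / azimuth_step_deg))
    directions = []
    for el_deg in elevations_deg:
        el = math.radians(el_deg)
        for k in range(n_azimuth):
            az = math.radians(k * azimuth_step_deg)
            directions.append((math.cos(el) * math.cos(az),
                               math.cos(el) * math.sin(az),
                               math.sin(el)))
    return directions


def ground_hit_range(direction, sensor_height_m, max_range_m):
    """Range to a flat ground plane z = -sensor_height_m, or None if no hit."""
    dz = direction[2]
    if dz >= 0.0:  # rays at or above the horizon never reach the ground
        return None
    range_m = sensor_height_m / -dz
    return range_m if range_m <= max_range_m else None


def time_of_flight_s(range_m):
    """Round-trip time for the pulse to reach the target and return."""
    return 2.0 * range_m / C_M_PER_S


rays = scan_directions(elevations_deg=[-15.0, -5.0, 0.0, 5.0], azimuth_step_deg=0.5)
hits = [r for r in (ground_hit_range(d, 1.8, 120.0) for d in rays) if r is not None]
print(len(rays), "rays,", len(hits), "ground returns")
```

In a full model, each returned range would also carry the reflection, attenuation and background-light terms before deciding whether the pulse is detected.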

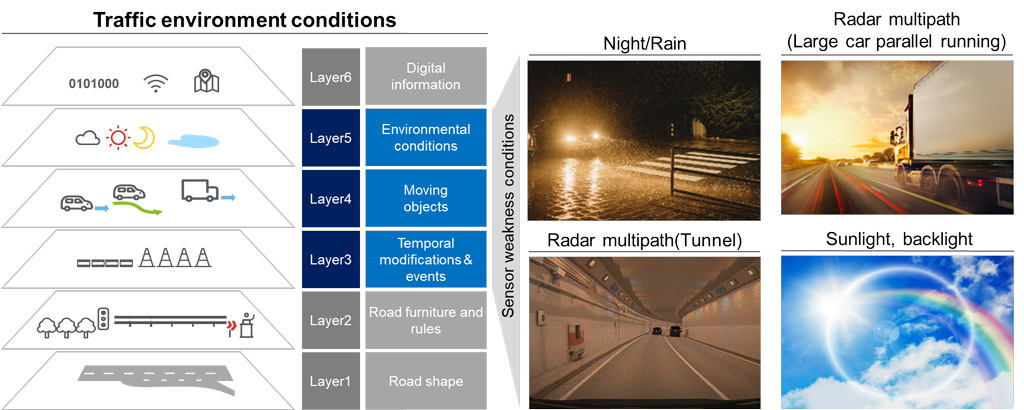

(4) Environmental model

The Pegasus project in Europe defines a six-level classification of driving environment scenarios. Validating sensing weaknesses depends largely on environmental conditions and requires object models with physical characteristics such as the reflection, transmission, and attenuation of electromagnetic waves (Fig. 8, Fig. 9a, 9b, 9c). The skylight model, which models sunlight, is effective for evaluating sensing-weakness scenarios such as backlight: by specifying the time of day and the latitude and longitude of the object, it creates a virtual space that faithfully reproduces how sunlight falls on the object (Fig. 9a).
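The inputs named for the skylight model (time of day, latitude, longitude) are enough to place the sun with standard solar-geometry approximations. The sketch below uses Cooper's declination formula, a common equation-of-time fit, and the usual elevation and azimuth relations, then flags a backlight condition when a low sun lies close to the camera's viewing direction. These are low-precision approximations chosen for illustration (precise work would use an algorithm such as NREL's SPA), and the thresholds in the hypothetical `is_backlit` helper are assumptions.

```python
import math


def declination_deg(day_of_year: int) -> float:
    """Cooper's approximation of the solar declination."""
    return 23.44 * math.sin(math.radians(360.0 * (284 + day_of_year) / 365.0))


def equation_of_time_min(day_of_year: int) -> float:
    """Approximate equation of time in minutes."""
    b = math.radians(360.0 * (day_of_year - 81) / 364.0)
    return 9.87 * math.sin(2 * b) - 7.53 * math.cos(b) - 1.5 * math.sin(b)


def hour_angle_deg(utc_hours: float, longitude_deg: float, day_of_year: int) -> float:
    """Hour angle from UTC clock time; east longitude positive, solar noon = 0."""
    solar_time_h = (utc_hours + longitude_deg / 15.0
                    + equation_of_time_min(day_of_year) / 60.0)
    return ((15.0 * (solar_time_h - 12.0) + 180.0) % 360.0) - 180.0


def sun_elevation_azimuth_deg(lat_deg: float, decl_deg: float, hour_deg: float):
    """Solar elevation and azimuth (degrees clockwise from north)."""
    lat, decl, h = map(math.radians, (lat_deg, decl_deg, hour_deg))
    sin_el = (math.sin(lat) * math.sin(decl)
              + math.cos(lat) * math.cos(decl) * math.cos(h))
    el = math.asin(max(-1.0, min(1.0, sin_el)))
    denom = math.cos(el) * math.cos(lat)
    if abs(denom) < 1e-12:  # sun at zenith or observer at a pole
        return math.degrees(el), 0.0
    cos_az = (math.sin(decl) - math.sin(el) * math.sin(lat)) / denom
    az = math.degrees(math.acos(max(-1.0, min(1.0, cos_az))))
    if h > 0.0:  # afternoon: sun is west of the meridian
        az = 360.0 - az
    return math.degrees(el), az


def is_backlit(camera_heading_deg, sun_el_deg, sun_az_deg,
               max_elevation_deg=15.0, max_offset_deg=20.0):
    """Hypothetical check: low sun close to the camera's viewing direction."""
    offset = abs((sun_az_deg - camera_heading_deg + 180.0) % 360.0 - 180.0)
    return 0.0 < sun_el_deg <= max_elevation_deg and offset <= max_offset_deg


# Usage: 35.7 N, 139.7 E, day 355, 06:30 UTC; camera facing southwest (225 deg).
n = 355
el, az = sun_elevation_azimuth_deg(35.7, declination_deg(n),
                                   hour_angle_deg(6.5, 139.7, n))
print(f"sun elevation {el:.1f} deg, azimuth {az:.1f} deg,"
      f" backlit: {is_backlit(225.0, el, az)}")
```

Sweeping the time of day or the vehicle heading through such a function is one way to generate the low-sun backlight cases that the skylight model is meant to reproduce.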