CONTRIBUTION TO SAFETY ASSURANCE

How extensive the set of safety assessment scenarios should be remains an open question. However, if test conditions can be set in a virtual space with good reproducibility, validation becomes far more efficient. In this project, we have set two scenario package milestones: (1) assessment protocols such as NCAP, and (2) validation of the actual traffic environment by modeling the demonstration area in the Odaiba urban area and on the Tokyo metro C1 highway, which makes it possible to validate conditions in which sensing is weak. We are currently developing a chain of perception models covering “driving environment objects – electromagnetic wave propagation – sensors”. A virtual space needs to be defined as a unit, called a scenario package, according to its purpose, and the reliability of safety assessment needs to be raised within each scenario package.

(1) Application to assessment validation such as NCAP

For advanced safety systems with automated driving functions, such as LKS (Lane Keeping Assist), ACC (Adaptive Cruise Control), and ALKS (Automated Lane Keeping System), validation protocols are defined in detail by Euro-NCAP, J-NCAP, and similar programs. Although the protocols differ somewhat with the traffic accident situation in each country, the important ones reflect real accident patterns involving pedestrians, bicycles, and right and left turns at intersections, as well as cut-in and cut-out scenarios for ALKS. In DIVP®, each assessment scenario is modeled in turn. As an example, Fig. 10 shows a simulation, using a sensor model, of a pedestrian stepping out from behind a parked car. Such scenarios, which can be called a “Virtual Proving Ground”, can be extended in a virtual space to system validations that take into account environmental effects such as rain, fog, and a low western sun.
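The extension of a fixed assessment scenario to several environmental conditions can be pictured as a simple cross product of scenarios and environments. The following is a minimal sketch only; the class names, field names, and condition labels are hypothetical and are not part of DIVP® or of any NCAP protocol.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class Environment:
    """Hypothetical environment description for one simulation run."""
    weather: str  # e.g. "clear", "rain", "fog"
    sun: str      # e.g. "high", "low_west"


@dataclass(frozen=True)
class ScenarioCase:
    """One assessment scenario paired with one environment."""
    scenario: str
    environment: Environment


def expand_scenarios(scenarios, weathers, suns):
    """Return one ScenarioCase per (scenario, weather, sun) combination."""
    return [
        ScenarioCase(name, Environment(weather, sun))
        for name, weather, sun in product(scenarios, weathers, suns)
    ]


# Illustrative labels only; real protocols define these in far more detail.
cases = expand_scenarios(
    scenarios=["pedestrian_from_behind_parked_car", "cut_in", "cut_out"],
    weathers=["clear", "rain", "fog"],
    suns=["high", "low_west"],
)
print(len(cases))  # 3 scenarios * 3 weathers * 2 sun positions = 18
```

Keeping the scenario and the environment as separate, immutable records means the same scenario definition can be rerun unchanged under every environmental condition, which is the reproducibility benefit a virtual space offers.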

(2) Application to the validation of the real environment “Odaiba and C1 highway”

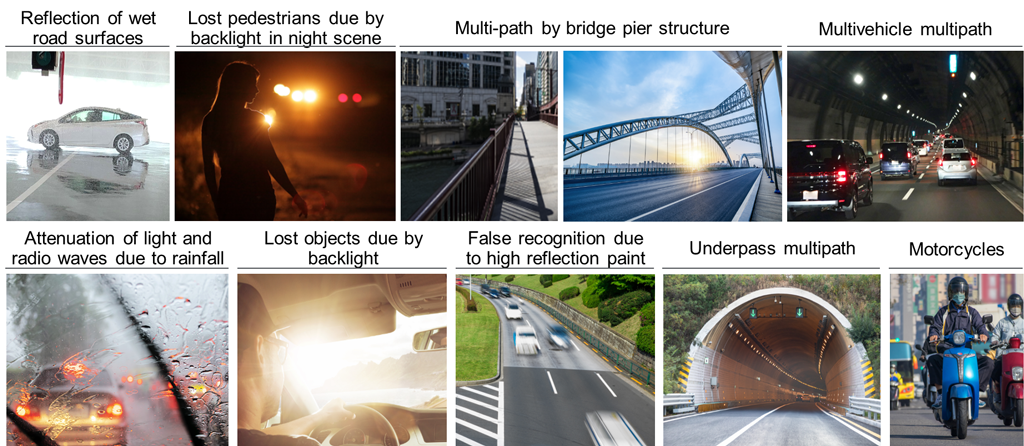

As the next scenario package, we are working to construct an environmental model of the Odaiba and C1 highway area, which is a hub for automated vehicle demonstrations. In this virtual space, real environmental factors (driving environment, road, land, dynamic objects, weather, etc.) are combined to validate scenarios in which sensors are weak (Fig. 11). In collaboration with other SIP automated driving demonstration projects, the sensor recognition malfunction data (location, video, recognition output, etc.) generated in this real environment can be fed into the DIVP® simulator and turned into a virtual environment, a “Virtual Community Ground”, that more users can evaluate. For example, the road surface in front of the Odaiba Telecom Center had been painted with a thermal barrier coating. As a result, the reflection characteristics of the asphalt and the white line were similar, which made it difficult for Lidar to detect the white line, and this effect was reproduced in the simulation model (Fig. 12). Collaboration of this kind with other automated driving projects is an effective framework unique to SIP-adus.
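The white line example can be illustrated with a simple contrast check: when the road surface and the lane marking return similar intensities, the contrast between them falls below what a detector needs. The sketch below is an illustration under stated assumptions, not the DIVP® sensor model; the function names, the Michelson-style contrast measure, the threshold, and all reflectance values are hypothetical.

```python
def intensity_contrast(marking: float, road: float) -> float:
    """Michelson-style contrast between two non-negative return intensities."""
    total = marking + road
    if total == 0:
        return 0.0
    return abs(marking - road) / total


def marking_detectable(marking: float, road: float, min_contrast: float = 0.2) -> bool:
    """Return True if the contrast reaches the (assumed) detection threshold."""
    return intensity_contrast(marking, road) >= min_contrast


# Hypothetical relative reflectance values, chosen only for illustration.
ordinary_asphalt = marking_detectable(marking=0.60, road=0.10)
coated_asphalt = marking_detectable(marking=0.60, road=0.55)

print(ordinary_asphalt)  # True: the marking stands out from dark asphalt
print(coated_asphalt)    # False: the coated surface reflects almost like the marking
```

A simulation model that reproduces the measured reflection characteristics of the coated surface would expose the same loss of contrast without a further on-road test.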

(at Daiba station in the Odaiba area)